The AI policy that Australian universities enforce in 2026 has become one of the most consequential issues facing students today. Three years ago, most institutions had no formal position on generative AI. By 2026, that gap has been filled with structured frameworks, disclosure requirements, and active misconduct enforcement carrying real academic consequences.

The shift happened fast. When ChatGPT crossed a hundred million users in early 2023, Australian universities faced an immediate problem with no ready answer. Students were already using generative AI for assignments. Policies either didn’t exist or were buried in outdated academic integrity documents written long before large language models became part of everyday student life.

The result is three major institutions that have each built a distinctly different approach. The AI policies that USYD, UNSW, and the University of Melbourne have developed reflect genuinely different educational philosophies, not just different rule sets. This guide breaks down what each university’s policy actually says, how strictly it’s enforced, and what it means for students on a day-to-day basis. For students navigating similar questions elsewhere, our breakdown of AI in UK academia covers how British universities are handling the same challenge.

Why AI Policy at Australian Universities Changed Everything in 2025

The 2023 ChatGPT Panic That Forced Universities to Act

The speed at which generative AI entered Australian classrooms caught administrators off guard. ChatGPT reached a hundred million users faster than any consumer application in history — and within weeks, academics were reporting submissions that argued too cleanly and cited sources that didn’t exist.

The immediate response was predictable: default to prohibition. Course guides were updated with one-line additions. Turnitin’s AI detection rolled out. The AI policies Australian universities scrambled to build in 2023 were reactive, inconsistent, and largely unenforceable. Detection tools flagged statistically likely AI-generated text, but false positive rates were high enough that no institution could rely on scores as standalone evidence. Non-native English speakers were disproportionately affected. Several universities quietly walked back their dependence on detection scores entirely.

By 2024, blanket bans weren’t sustainable. Formal frameworks emerged. And by 2025, the AI policies Australian universities had built carried real structure and real consequences.

What “Academic Integrity” Actually Means in the AI Era

AI doesn’t copy existing text — it produces new text on demand, tailored to any topic. That’s a fundamentally different challenge from plagiarism detection. The debate shifted from “did this student copy?” to “what level of AI involvement is academically acceptable?”

USYD, UNSW, and Melbourne each answered that question differently. Understanding those differences is the starting point for navigating AI policy at Australian universities in 2026. Students who struggle with source acknowledgment alongside these AI rules can refer to our guide on common referencing mistakes for practical guidance.

How We Evaluated AI Policy Strictness (Our Methodology)

Calling any university policy “strict” without a clear basis is just opinion. To make this comparison of AI policy at Australian universities meaningful, each institution was evaluated against six specific criteria applied consistently across all three.

6 Criteria Used to Rank Each University

1. AI permitted in general coursework: Does the default position allow generative AI in take-home assignments and essays, or does the policy start from restriction?

2. AI permitted in formal exams: Are students allowed AI tools in supervised, invigilated settings?

3. Disclosure requirements: Is acknowledging AI use mandatory, and how specific must that acknowledgment be?

4. Enforcement mechanisms: How does each university investigate suspected AI misuse: detection software, verbal examination, human review, or a combination?

5. Penalty severity: What are the documented consequences for confirmed misuse, from first offence through to repeat violations?

6. Instructor control: How much authority do individual coordinators have over AI rules, and does that create consistency or confusion?

The stricter the default position, the heavier the enforcement, and the more severe the penalties — the higher the strictness ranking. The ranking reflects the overall policy environment a student actually encounters, not just what the formal document says on paper. Understanding how to submit work correctly within these frameworks is equally important — covered in our guide on how to structure a university assignment properly.

University of Sydney (USYD) AI Policy Explained

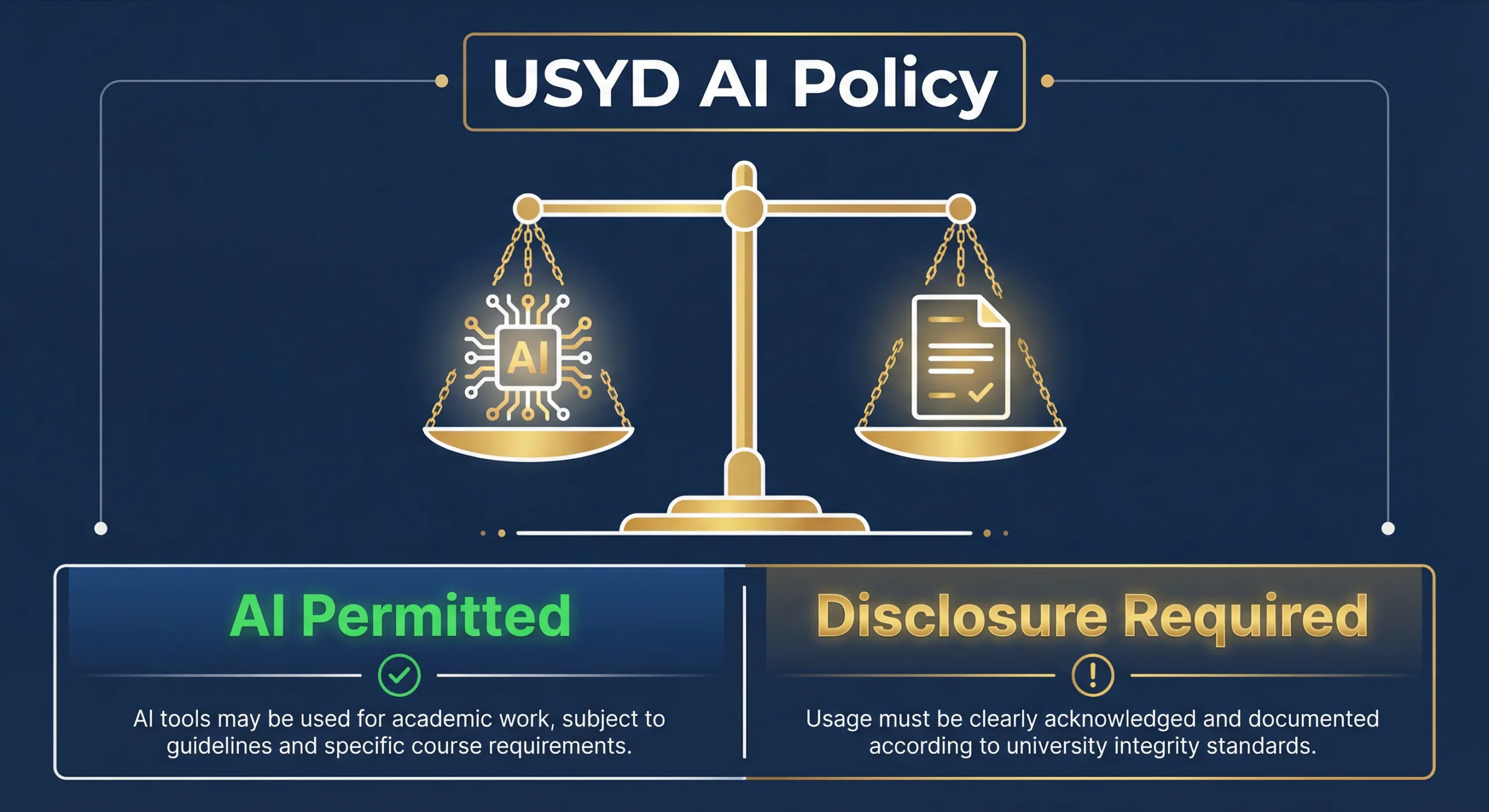

Of the three universities compared here, USYD has built the most structurally transparent approach to AI policy. Rather than leaving permissions ambiguous, USYD introduced a formal two-lane system that applies across the institution. The official USYD AI policy is documented at sydney.edu.au.

The Two-Lane Assessment System

USYD divides all assessments into two categories, and AI rules follow directly from which category an assessment falls into.

Lane one covers “secure assessments” — in-person exams, supervised tests, invigilated tasks. Generative AI is prohibited unless a coordinator has explicitly stated otherwise in writing. Lane two covers “open assessments” — take-home essays, research projects, unsupervised tasks. In open assessments, generative AI is permitted — with full, documented disclosure attached.

The two-lane structure matches the rule to the actual risk level of the assessment type. Of the three policies compared here, USYD’s is the most direct acknowledgment that AI exists and students will use it.

USYD AI Disclosure Rules: What Students Must Do

Disclosure at USYD is documentation, not a checkbox. Students must identify the tool and version used, name the publisher, provide the URL, describe how it was used, and in some cases provide actual prompt logs. Hiding AI use in an open assessment constitutes academic misconduct — the same category as plagiarism. Our guide on common referencing mistakes covers acknowledgment obligations that apply alongside these AI rules.
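The two-lane rule and the disclosure checklist described above can be sketched as a small piece of decision logic. This is only an illustration of the rules as this article summarises them, not an official USYD tool or form: the names `ai_permitted`, `missing_fields`, and the `Disclosure` field names are hypothetical labels chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical field names for the items the article lists;
# they are NOT taken from any official USYD declaration form.
REQUIRED_FIELDS = ("tool", "version", "publisher", "url", "usage_description")


@dataclass
class Disclosure:
    tool: str = ""
    version: str = ""
    publisher: str = ""
    url: str = ""
    usage_description: str = ""
    prompt_log: Optional[str] = None  # the article says logs are needed "in some cases"


def ai_permitted(lane: str, written_exception: bool = False) -> bool:
    """Two-lane rule as summarised above.

    "open"   -> generative AI allowed (disclosure still required)
    "secure" -> prohibited unless the coordinator granted a written exception
    """
    if lane == "open":
        return True
    if lane == "secure":
        return written_exception
    raise ValueError(f"unknown lane: {lane!r}")


def missing_fields(d: Disclosure, prompt_log_required: bool = False) -> list[str]:
    """Return the names of disclosure items that are still empty."""
    missing = [name for name in REQUIRED_FIELDS if not getattr(d, name).strip()]
    if prompt_log_required and not (d.prompt_log or "").strip():
        missing.append("prompt_log")
    return missing


if __name__ == "__main__":
    draft = Disclosure(tool="ChatGPT", version="example-version", publisher="OpenAI")
    print(ai_permitted("open"))    # open assessment: allowed with disclosure
    print(missing_fields(draft))   # items still to be filled in before submitting
```

Treating an incomplete disclosure as a list of missing items, rather than a simple pass or fail, mirrors the point above that disclosure is documentation and not a checkbox. The unit of study outline remains the authority on what a specific assessment requires.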

USYD AI Policy for Exams vs Assignments

In formal exams, AI is banned unless the coordinator specifies otherwise in writing. In open assignments, AI is permitted with full disclosure. USYD’s publicly stated position is that graduates enter a workforce where AI is standard, so the goal is AI literacy, not prohibition.

Penalties for AI Misuse at USYD

Undisclosed AI use in open assessments, or any AI use in secure assessments, goes through USYD’s standard academic integrity process. Consequences range from formal warnings and grade penalties through to course failure and suspension for serious or repeat violations.

UNSW AI Policy Explained

UNSW has built the most elaborate and systematically enforced approach of the three universities compared here. Where USYD works with two lanes, UNSW operates a six-level classification system applied to every single assessment across the university. The official UNSW AI policy is documented at unsw.edu.au.

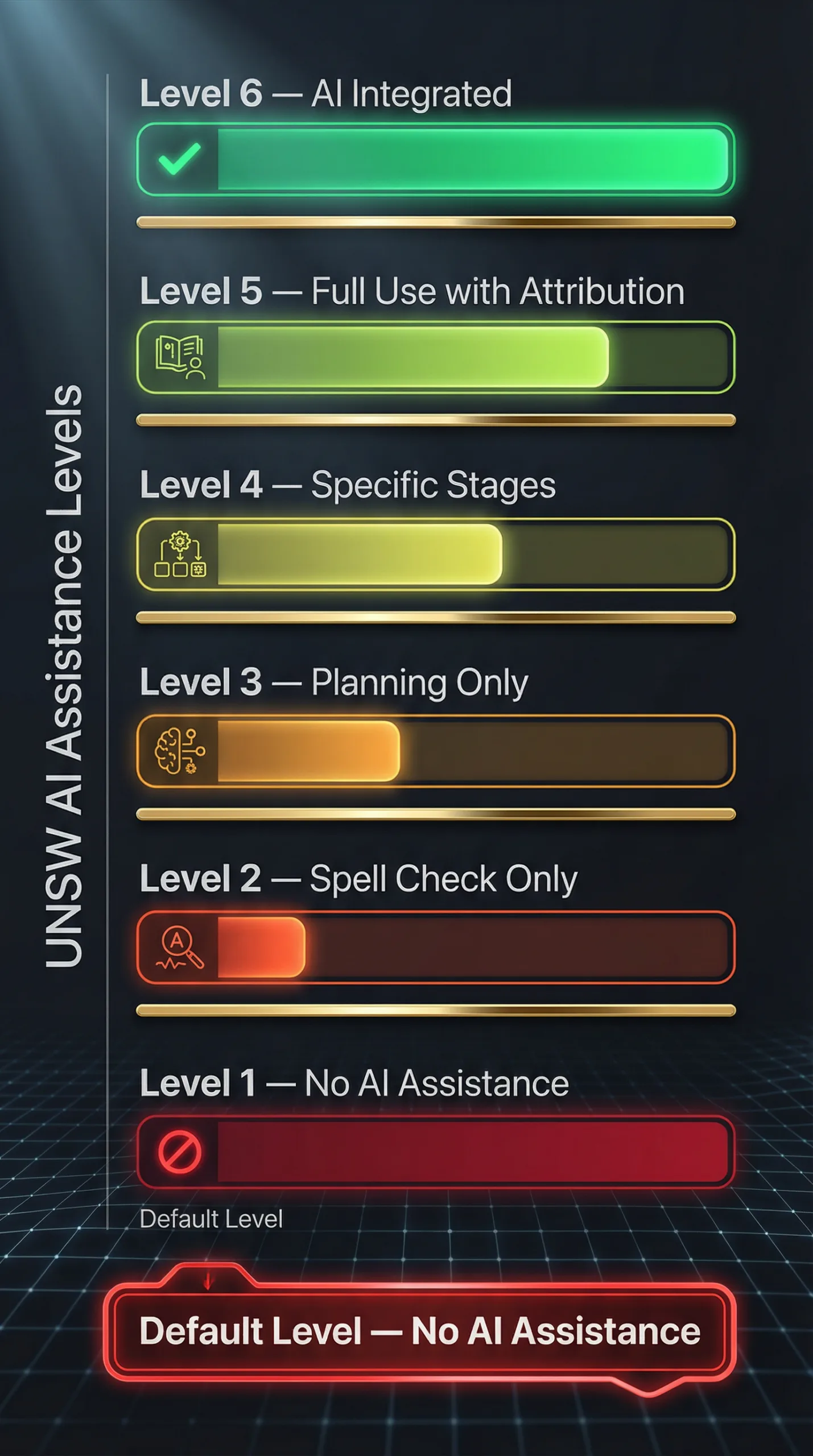

The 6 Levels of AI Assistance Framework

Every UNSW assessment carries a designated AI assistance level set by the course convenor:

Level 1 (No AI Assistance): Generative AI completely prohibited. Default level for unspecified assessments.

Level 2 (Minimal Assistance): Spell check and basic grammar only. No prompt-based AI.

Level 3 (Planning Only): AI for brainstorming and structure. Writing must be the student’s own.

Level 4 (Production Stages): AI permitted for specific defined stages only.

Level 5 (Full Use with Attribution): Extensive AI use permitted. All contributions must be cited.

Level 6 (AI Integrated): Assessment specifically designed around AI as a functional component.

The critical detail: if a convenor hasn’t assigned a level, Level 1 conditions effectively apply. Students cannot assume permission where none has been granted.
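The default behaviour is the part students most often get wrong, so it may help to see it as a lookup with a fallback. The sketch below encodes the six levels as listed in this article and falls back to Level 1 when no level has been assigned. The names `AssistanceLevel`, `effective_level`, and `prompt_based_ai_allowed` are hypothetical and exist only for this example. They are not part of any UNSW system.

```python
from enum import IntEnum
from typing import Optional


class AssistanceLevel(IntEnum):
    """The six levels as summarised in this article (labels are illustrative)."""
    NO_AI = 1
    MINIMAL = 2
    PLANNING_ONLY = 3
    PRODUCTION_STAGES = 4
    FULL_USE_WITH_ATTRIBUTION = 5
    AI_INTEGRATED = 6


def effective_level(assigned: Optional[AssistanceLevel]) -> AssistanceLevel:
    """If the convenor has not assigned a level, Level 1 conditions apply."""
    return AssistanceLevel.NO_AI if assigned is None else assigned


def prompt_based_ai_allowed(assigned: Optional[AssistanceLevel]) -> bool:
    """Prompt-based tools are only in scope from Level 3 upward,
    and even then only within the boundaries the convenor defines."""
    return effective_level(assigned) >= AssistanceLevel.PLANNING_ONLY


if __name__ == "__main__":
    print(effective_level(None).name)                              # unspecified assessment
    print(prompt_based_ai_allowed(None))                           # no level assigned
    print(prompt_based_ai_allowed(AssistanceLevel.PLANNING_ONLY))  # Level 3
```

A `True` result here only means prompt-based AI is not ruled out by the level itself. What is actually allowed at Levels 3 to 6 still depends on the limits written into the course outline.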

UNSW AI Misconduct: What Happens If You’re Caught

UNSW’s enforcement has one feature no other institution in this comparison has formalised — the verbal explanation requirement. Suspected AI misuse triggers a direct conversation where the student must explain their submission. Students who cannot are referred to the Conduct and Integrity Office. Consequences range from failing the assessment to expulsion. UNSW investigated 704 misconduct cases in 2024 — confirming the system actively runs. Our post on why assignments get low grades covers academic risk factors students should address before reaching this point.

UNSW AI Detection: How It Works in 2026

Turnitin’s AI detection is active across all UNSW submissions — but detection scores are not standalone evidence of misconduct. They trigger human review, not automatic consequence. The verbal explanation step exists because it tests something software cannot — genuine intellectual ownership of submitted work.
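The escalation path described in this section can be pictured as a short sequence of checks. The sketch below is a simplified model built only from this article's description: a detection flag leads to human review, continuing suspicion leads to a verbal explanation, and an inadequate explanation leads to referral. The function name, the parameters, and the assumption that a cleared review or an adequate explanation ends the matter are illustrative choices, not UNSW's documented procedure.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    NO_ACTION = "no action"
    HUMAN_REVIEW = "human review"
    VERBAL_EXPLANATION = "verbal explanation"
    REFERRAL = "referral to Conduct and Integrity Office"


def next_step(
    flagged: bool,
    reviewer_suspects_misuse: Optional[bool] = None,
    explanation_adequate: Optional[bool] = None,
) -> Outcome:
    """Return the next stage; None means that stage has not happened yet."""
    if not flagged:
        return Outcome.NO_ACTION           # this sketch models only the flag-triggered path
    if reviewer_suspects_misuse is None:
        return Outcome.HUMAN_REVIEW        # a score alone never decides the case
    if not reviewer_suspects_misuse:
        return Outcome.NO_ACTION           # assumption: a cleared review ends the matter
    if explanation_adequate is None:
        return Outcome.VERBAL_EXPLANATION  # student is asked to explain the work
    if explanation_adequate:
        return Outcome.NO_ACTION           # assumption: an adequate explanation ends the matter
    return Outcome.REFERRAL


if __name__ == "__main__":
    print(next_step(True).value)
    print(next_step(True, True).value)
    print(next_step(True, True, False).value)
```

The point the model makes is structural: there is no branch in which a detection score leads directly to a penalty.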

UNSW ChatGPT Policy for Assignments

ChatGPT falls under generative AI across all six levels. At Level 1 — the default — it is prohibited. At Levels 3 through 6, use is permitted within the boundaries the convenor has defined. Check the course outline for every individual assessment. Under UNSW’s framework, assumptions about ChatGPT permissions will be wrong more often than right.

University of Melbourne AI Policy Explained

The University of Melbourne operates a fundamentally different model from both USYD and UNSW. Rather than a university-wide framework with defined lanes or numbered levels, Melbourne distributes AI policy control to individual faculties and subject coordinators, governed by an overarching policy — MPF1326, the Assessment and Results Policy. The official University of Melbourne AI policy is documented at unimelb.edu.au.

How UniMelb’s Faculty-Controlled Model Works

MPF1326 establishes one non-negotiable baseline: submitting AI-generated content as your own work without acknowledgment is academic misconduct. That floor applies universally. Everything above it is coordinator territory.

Subject coordinators determine whether generative AI is permitted, what it can be used for, and what disclosure is required. Some courses actively incorporate AI tools. Others ban generative AI entirely. Both are valid under MPF1326. This means a Melbourne student cannot carry a single assumption about AI permissions across their subject load — the subject guide for each individual course is the only authoritative document.

The flexibility is deliberate. The tradeoff is consistency. Students navigating multiple subjects with different AI rules report the experience feels fragmented — a pattern reflected across student forums and university publications from 2025. Getting assignment structure right within these varying requirements matters — our guide on how to structure a university assignment properly covers fundamentals that apply regardless of which AI rules are in place.

UniMelb AI Declaration: What You Need to Submit

Where Melbourne standardises is disclosure. Any permitted AI use must be formally declared — tool name, description of use, and confirmation that interaction records are available if requested. Incomplete declarations carry the same misconduct risk as no declaration at all. Melbourne adopted Turnitin’s AI detection in April 2023 — earlier than either USYD or UNSW — reflecting how seriously the institution took the detection question from the outset.

UniMelb AI Spark and AILA Tools for Students

Melbourne is developing AI infrastructure alongside its regulatory framework. AILA — an AI learning assistant — has been piloted within Melbourne’s learning management system. AI Spark provides subject-specific guidance on what’s permitted and how to engage with AI responsibly. These signal Melbourne’s long-term direction: AI embedded into the learning ecosystem, not just regulated from outside it.

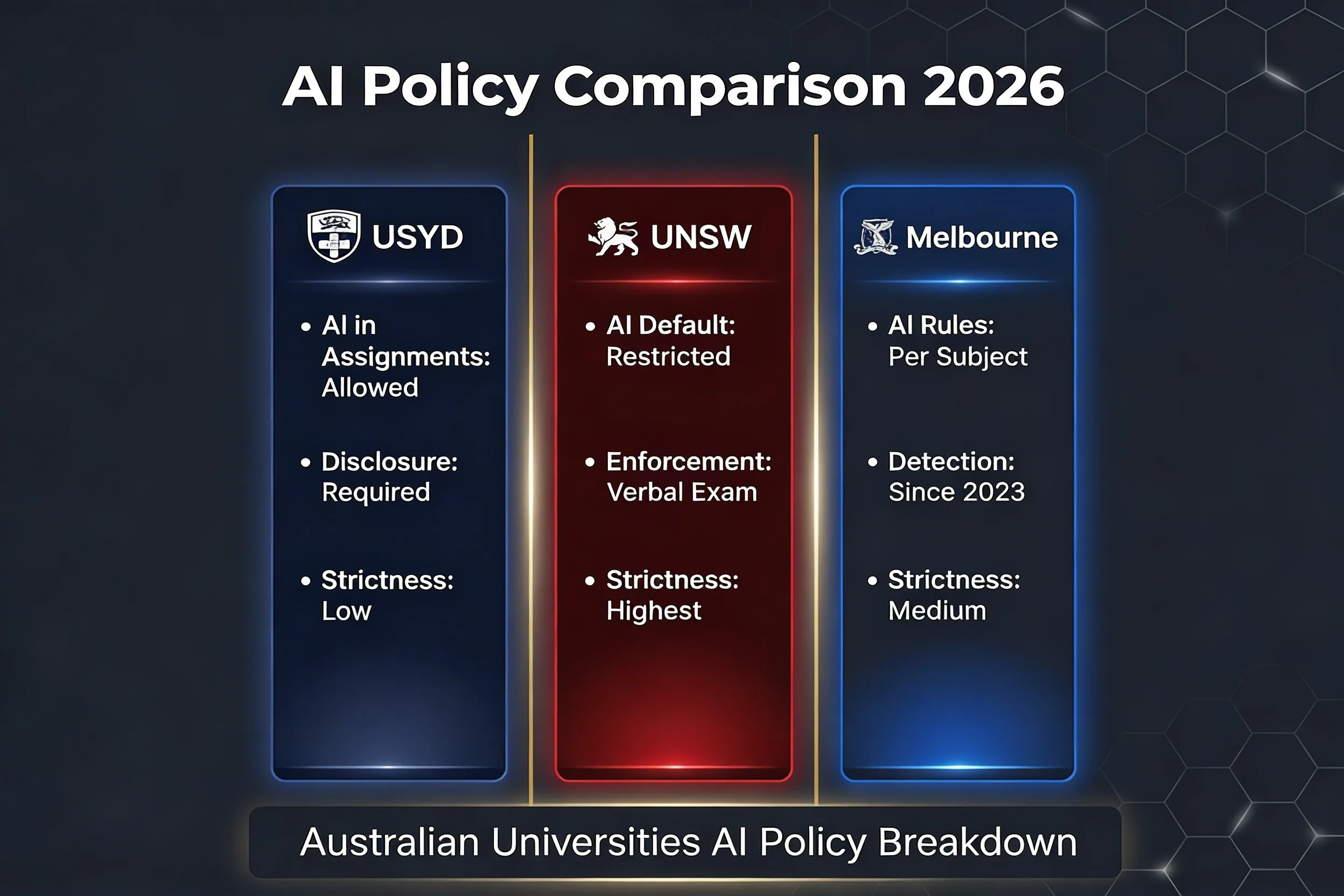

AI Policy Comparison: USYD vs UNSW vs Melbourne

For students trying to make sense of AI policy at Australian universities, this table brings the key differences into a single view.

| Feature | USYD | UNSW | Melbourne |
|---|---|---|---|
| Framework name | Two-Lane System | Levels of AI Assistance | Coordinator-controlled (MPF1326) |
| Default stance on AI | Allowed in open assessments | Restricted (leans Level 1) | Depends on coordinator |
| AI in formal exams | Banned | Banned | Banned |
| AI in assignments | Permitted with full disclosure | Depends on assigned level | Depends on subject guide |
| Disclosure mandatory | Yes (tool, version, URL, prompts) | Yes (per level requirements) | Yes (written declaration) |
| Turnitin AI detection | Active since 2025 | Active | Active since April 2023 |
| Verbal explanation required | Not formally | Yes (central to process) | Not formally |
| Instructor control | Medium | High (within defined levels) | Very high |
| Documented misconduct cases | Not published | 704 cases in 2024 | Not published |
| Overall strictness | Lowest | Highest | Medium-High |

Two things stand out when AI policy at Australian universities is placed side by side.

First — the one point of genuine consensus: AI is banned in formal supervised exams across all three institutions without exception. That consistency is unlikely to change regardless of how broader policy evolves.

Second — the divergence starts the moment a student opens a take-home assignment. USYD’s default permits AI with disclosure. UNSW’s default leans restrictive. Melbourne’s default is undefined at university level — entirely dependent on the coordinator running each subject.

The verbal explanation requirement at UNSW remains the single enforcement feature with no formal equivalent elsewhere. It changes what getting caught looks like more fundamentally than any detection tool, and it is the clearest differentiator between UNSW’s approach and the policies USYD and Melbourne have built.

Strictness Ranking: Which Australian University Has the Toughest AI Rules?

The comparison table makes the broad picture clear. The ranking below makes it explicit — with the reasoning behind each position.

#1 UNSW — Most Restrictive

UNSW sits at the top of the strictness ranking on every criterion applied in this analysis. The six-level framework means every assessment has a defined AI position with no ambiguity by design. The conservative default for unspecified assessments means students cannot assume permission where none has been granted. The verbal explanation requirement adds an enforcement layer detection software cannot replicate. And the published misconduct data — 704 investigated cases in 2024 — confirms the enforcement infrastructure actively runs.

What makes UNSW’s AI policy distinctively strict among the three is not that AI is always banned. Several levels permit significant AI involvement. What makes it strict is the systematic framework, the restrictive default, and consequences enforced through a process that goes beyond automated detection.

#2 University of Melbourne — Controlled Flexibility

Melbourne holds the middle position. MPF1326 is unambiguous that undisclosed AI use is misconduct. The early Turnitin adoption in April 2023 and specific declaration requirements reflect genuine institutional seriousness. What keeps Melbourne out of the top position is implementation consistency: the coordinator-controlled model produces real variation in how students experience AI policy across their degree. Melbourne’s intent is strict, but the day-to-day experience varies significantly depending on which subjects a student takes in any given semester.

#3 University of Sydney — Most AI-Friendly

USYD sits at the bottom of the strictness ranking — but that is a deliberate institutional choice, not a policy weakness. The two-lane system is coherent and transparent. AI is permitted in open assessments because USYD has decided AI literacy is a graduate outcome worth building. Disclosure requirements are specific. Misconduct consequences for undisclosed use are real. What places USYD third in any comparison of AI policy at Australian universities is simply that the default starting position is more open than either UNSW or Melbourne — and that reflects a considered educational philosophy, not an absence of enforcement.

Student Reality: What These AI Policies Mean for Your Assignments

Policy documents describe what universities intend. Student experience describes what actually happens. The gap between the two is where most AI policy confusion lives — and where students end up in misconduct hearings they didn’t see coming.

Across student forums, university subreddits, and published student newspaper pieces from 2025 into 2026, three consistent themes emerge regardless of institution.

The rules vary more than students expect — even within a single degree.

At Melbourne this is built into the model by design. But the same phenomenon appears at UNSW, where different convenors assign different assistance levels, and at USYD, where open assessment permissions still depend on coordinator interpretation. Students consistently arrive at a new semester assuming last semester’s AI rules still apply, and find they don’t. The rules shift course by course and semester by semester. Reading the subject guide for every individual course every semester is the only reliable way to know where a given assessment sits.

Disclosure requirements catch students off guard.

Most students understand AI use needs acknowledging. Fewer expect the specificity USYD and Melbourne’s declaration frameworks actually require — tool name, version, publisher, URL, description of use, and in some cases full interaction records. Incomplete disclosure carries the same misconduct risk as no disclosure at all. Getting submission requirements right from the start reduces this risk significantly — our guide on how to structure a university assignment properly covers the fundamentals every student should have in place before submitting.

“I didn’t know” carries almost no weight in a misconduct hearing.

All three universities now include AI policy coverage in student orientation. Academic integrity modules addressing generative AI are embedded in onboarding at USYD, UNSW, and Melbourne. The institutional position across all three is that students have been informed, and that position holds in misconduct proceedings regardless of whether the student actually read the documentation.

The practical guidance is straightforward: check the subject guide before starting any assessment, ask the coordinator in writing if AI permissions aren’t clear, and keep records of any AI interaction used. Students who need support before a deadline becomes a crisis can find practical options in our urgent assignment help resource.

AI Detection at Australian Universities: Does It Actually Work?

Honestly — partially, inconsistently, and not in the way most students assume.

All three universities use Turnitin’s AI detection capability. Melbourne activated it in April 2023. UNSW and USYD followed as it became standard across the sector. Every submission at these institutions passes through software capable of flagging statistically likely AI-generated content. What that flag actually triggers is far more limited than most students realise.

The false positive problem is real and documented.

Turnitin identifies patterns statistically correlated with AI generation: sentence uniformity, predictable structure, certain syntactic patterns. Strong academic writing, particularly from non-native English speakers writing formally, produces the same patterns. Studies published in 2024 documented false positive rates significant enough that using detection scores as standalone misconduct evidence became indefensible. Australian universities repositioned detection as a trigger for human review, not a basis for automatic consequence. That shift is now written into the formal AI policies of Australian universities.

UNSW’s verbal explanation requirement addresses what software cannot.

The most substantive enforcement mechanism across all three universities is not detection software — it is UNSW’s verbal explanation process. A student can produce text that evades pattern recognition. Explaining the intellectual process behind that text in real time, to a convenor who knows the material, is significantly harder. No detection tool replicates that. Our analysis of why sample-based academic help is safer than AI-generated content explains why AI-generated submissions carry risks extending well beyond what software captures.

The longer-term response is assessment redesign.

Across all three universities, academics are redesigning assessments to reduce AI exposure rather than relying on detection after the fact. More oral presentations. More in-class written components. More process-based tasks requiring documented thinking across a project timeline. An assessment that cannot be completed by AI doesn’t need a detection layer, and the longer-term AI policies Australian universities are building increasingly reflect that logic.

Future of AI Policy at Australian Universities (2026 and Beyond)

The frameworks USYD, UNSW, and Melbourne have built are not endpoints — they are early-stage responses to a problem still developing. Where AI policy at Australian universities goes from here matters as much as where it currently sits.

The era of blanket prohibition is not coming back.

The 2023 instinct to ban everything produced policies that were unenforceable and inconsistent. Universities have collectively moved past that position. The conversation around AI policy at Australian universities in 2026 is not about whether AI exists in the learning environment — it is about how institutions manage its role transparently. No major Australian university is seriously pursuing a return to blanket prohibition.

Assessment design is shifting faster than policy documents.

The more significant structural change is not in formal frameworks but in how assessments are being designed. Oral examinations are expanding. In-person written components are being reintroduced. Process-based tasks requiring documented thinking across a project timeline are becoming more common. An assessment architecture requiring demonstrated understanding in controlled settings is inherently more resistant to AI substitution than a standard take-home essay. The rules Australian universities enforce are increasingly being reinforced by assessment structures that reduce misuse opportunity before it occurs.

AI literacy is moving from elective to core.

All three universities are embedding AI literacy into curriculum beyond policy compliance. USYD frames AI competency as a graduate outcome. Melbourne’s AI Spark and AILA position AI engagement as a learning skill. UNSW’s Level 6 assessments make AI the subject of assessment itself. The trajectory points toward AI literacy becoming a standard component of what an Australian university degree produces.

Policy standardisation across the sector is likely.

Current variation between USYD, UNSW, and Melbourne creates genuine confusion for students transferring between institutions or comparing notes across universities. Sector-wide bodies including Universities Australia have signalled interest in developing common frameworks. The institution most likely to influence any emerging standard is UNSW — its six-level system is the most specific and the best documented of the three.

Conclusion: Which Australian University’s AI Policy Suits You?

Three universities. Three frameworks. Three genuinely different answers to the same question.

UNSW has the strictest AI policy of the three institutions compared here, by structure, enforcement mechanism, and documented consequence. The six-level framework is systematic. The default leans restrictive. The verbal explanation process adds a human verification layer that neither of the other two universities has formalised to the same degree. The 704 misconduct cases investigated in 2024 confirm the system actively operates. Of the three policies, UNSW’s leaves the least room for ambiguity, intentionally.

Melbourne sits in a firmly controlled middle position. MPF1326 sets a clear and enforceable floor — undisclosed AI use is misconduct, universally. The early Turnitin adoption and detailed declaration requirements signal genuine institutional seriousness. What keeps Melbourne out of the top position is the coordinator-controlled model, which produces real variation in how students experience policy across their degree. The intent is consistent. The implementation is not always.

USYD is the most AI-friendly of the three — a deliberate institutional choice, not a policy gap. The two-lane system is coherent and transparent. The disclosure requirements are specific. The misconduct consequences are real. USYD’s AI policy asks for transparency rather than restriction — a philosophy increasingly influential in how AI policy at Australian universities is discussed at sector level.

The more useful question for students is not which university is strictest but which policy environment matches how they learn and what they want their degree to represent. UNSW suits students who want uniform rules consistently applied. USYD suits students building genuine AI competency. Melbourne suits students who prioritise subject-level flexibility, provided they read every subject guide carefully.

What none of these frameworks offer in 2026 is simplicity. The students who navigate AI policy at Australian universities successfully treat the subject guide as a primary document, disclosure as a serious obligation, and AI as a tool carrying full academic accountability. For students who need expert support navigating assignment requirements within these frameworks, our assignment help online service provides guidance tailored to Australian university standards.

FAQs: AI Policy at Australian Universities

Is ChatGPT banned at Australian universities?

Not categorically. The AI policies Australian universities have in place in 2026 are far more nuanced than a blanket ban.

At USYD, ChatGPT is permitted in open assessments with full disclosure: tool name, version, publisher, URL, and description of use. In supervised exams it is banned unless the coordinator has stated otherwise in writing. At UNSW, permission depends entirely on the assistance level assigned to the specific assessment. At Level 1, the default for unspecified assessments, ChatGPT is prohibited. At Levels 3 through 6, use is permitted within convenor-defined boundaries. At Melbourne, the answer is determined course by course by the subject coordinator. The subject guide is the only reliable source for each individual assessment.

The blanket ban era of 2023 is largely over. But “not banned” does not mean unrestricted — and that distinction is where most misconduct cases originate.

Which Australian university has the strictest AI policy?

Based on framework structure, default positions, enforcement mechanisms, and documented misconduct data, UNSW is the strictest of the three. The six-level system, conservative default, verbal explanation requirement, and 704 cases investigated in 2024 collectively place UNSW at the top of any honest ranking of AI policy at Australian universities. Melbourne sits second. USYD is the most AI-friendly of the three.

Can I use AI for assignments at USYD?

Yes, in open assessments. Take-home essays, research projects, and unsupervised tasks permit generative AI under USYD’s two-lane system. Full disclosure is required: tool name, version, publisher, URL, description of use, and in some cases prompt logs. In supervised exams, AI is banned unless the coordinator has stated otherwise in writing. Using AI in a secure assessment without that permission, or in an open assessment without adequate disclosure, constitutes academic misconduct under USYD’s integrity framework.

What happens if UNSW catches AI misuse?

The process begins with a verbal explanation — the student is asked to walk through their submission directly. Students who cannot adequately explain their own work are referred to the Conduct and Integrity Office. Consequences range from failing the assessment through to expulsion for serious or repeat violations. UNSW investigated 704 misconduct cases in 2024 — confirming this process runs actively, not theoretically. Students who want to avoid reaching this point can find practical academic guidance in our urgent assignment help resource.

Does University of Melbourne allow generative AI?

It depends on the subject. Melbourne’s coordinator-controlled model means each subject coordinator determines whether generative AI is permitted, what it can be used for, and what disclosure is required. Some courses actively incorporate AI tools. Others prohibit generative AI entirely. Both are valid under MPF1326. The subject guide for each individual course is the authoritative document. Any permitted AI use must be formally declared — tool name, description of use, and confirmation that interaction records are available if requested. Undisclosed AI use in any context is academic misconduct under MPF1326.